Server Farm Setup for Knife Depot

July 2nd, 2015 (5 minute read)

OK… the title is a little misleading. This post actually discusses the setup for my entire company, Internet Retail Connection. We run a few other sites, like Picnic World and Backyard Chirper, but Knife Depot is our bread and butter.

A very abridged background story is that we run our own ecommerce websites on a custom platform developed over the last 10 years. It started as a legacy platform that ran on PHP 4 (yikes) and is now almost completely run on the Symfony Framework. We have unique business requirements that make a custom platform the best choice for us.

Our Old Setup

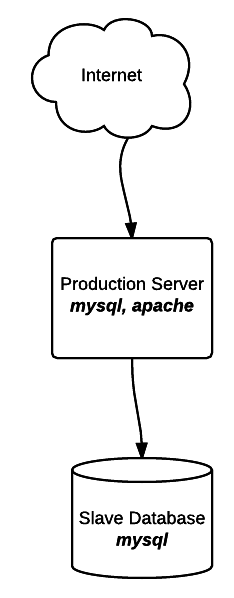

For a long time, we ran our entire business on two machines:

- A single dedicated server at WestHost

- A VM at VPS.net

The VM at VPS.net was only used as a MySQL slave for backup purposes, so we pretty much ran everything on one machine.

The huge benefit of this server farm setup is its simplicity. Everything is on one machine, so it’s really easy to monitor, maintain, deploy code, etc. The KISS principle really applies here.

The big downside is that it’s too simple: it’s a single point of failure. If a hard drive dies, the entire infrastructure goes down. Need to take down a web server for maintenance? The entire infrastructure goes down. Suddenly getting a lot of traffic? It’s impossible to scale up quickly.

Needless to say, it was time for a change, and I wanted to do it on my timetable and not during an emergency. After a bunch of research, I decided to move to cloud servers on DigitalOcean, although AWS was a close second.

Moving To A Multi-Server Setup

I wanted to have multiple web/application servers, but our application was designed to run on a single server. These were the primary roadblocks to running it on multiple servers:

Assets (product images, videos)

These need to be available to all web servers. To solve this, we moved them to Amazon S3 and serve them via Amazon’s CloudFront CDN.

Session Data

When someone browses our site, we keep track of certain information (which products they viewed, items in their cart, etc.) via a session. By default, PHP stores session data as files on the local web server. Since someone browsing our site might be directed to different web servers during the same session, this doesn’t work: the second server can’t see the session file written by the first.

At first, I tried configuring Varnish (our load balancer) to use sticky sessions, always sending the same customer to the same application server based on their IP address. This didn’t work very well because some ISPs dynamically change their customers’ IP addresses. If a customer got directed to a different application server, they would lose their session. This manifested itself in phone calls and emails from customers telling us that their cart contents were “lost”. Not good.
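To see why IP-based stickiness breaks, here is a minimal Python sketch of routing a request by a hash of the client’s IP address. The backend names and the SHA-256-modulo scheme are assumptions for illustration only; Varnish’s own directors hash differently, but the failure mode is the same: when a customer’s IP changes, the new address can map to a server that has never seen their session.

```python
import hashlib
from typing import Sequence

# Hypothetical backend names; the post does not list the real server names.
BACKENDS = ["app1", "app2", "app3"]


def pick_backend(client_ip: str, backends: Sequence[str]) -> str:
    """Map a client IP to a backend by hashing it (IP-based stickiness)."""
    digest = hashlib.sha256(client_ip.encode("ascii")).digest()
    # Interpret the first 8 bytes of the digest as an integer and take it
    # modulo the backend count, so the same IP always lands on the same server.
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


if __name__ == "__main__":
    # The same IP is routed consistently...
    assert pick_backend("203.0.113.7", BACKENDS) == pick_backend("203.0.113.7", BACKENDS)
    # ...but after the ISP hands the customer a new IP, the new address may
    # hash to a different server, one without this customer's session file.
    for ip in ["203.0.113.7", "198.51.100.42"]:
        print(ip, "->", pick_backend(ip, BACKENDS))
```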

Next, I tried storing the session data in MySQL using Symfony’s PdoSessionHandler, but it is broken for high-traffic sites.

Finally, I ended up storing the session data in MongoDB using Symfony’s poorly documented but well-written MongoDbSessionHandler. It works perfectly for this type of storage and is very fast.
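The key idea is that every application server reads and writes sessions through one shared store instead of its local disk. Here is a minimal Python sketch of that idea; the `SharedSessionStore` class is a hypothetical in-memory stand-in for a MongoDB collection (no pymongo), and the read/write/destroy/gc methods only loosely mirror PHP’s `SessionHandlerInterface`. It is not Symfony’s implementation.

```python
import time
from typing import Dict, Optional


class SharedSessionStore:
    """Hypothetical in-memory stand-in for a MongoDB sessions collection.

    Each document is keyed by session ID and holds the serialized session
    payload plus the time it was last written.
    """

    def __init__(self) -> None:
        self._docs: Dict[str, dict] = {}

    def upsert(self, session_id: str, data: bytes, now: float) -> None:
        self._docs[session_id] = {"_id": session_id, "data": data, "time": now}

    def find(self, session_id: str) -> Optional[dict]:
        return self._docs.get(session_id)

    def delete(self, session_id: str) -> None:
        self._docs.pop(session_id, None)

    def delete_older_than(self, cutoff: float) -> int:
        stale = [sid for sid, doc in self._docs.items() if doc["time"] < cutoff]
        for sid in stale:
            del self._docs[sid]
        return len(stale)


class SessionHandler:
    """Hypothetical per-server handler; every instance shares one store."""

    def __init__(self, store: SharedSessionStore) -> None:
        self.store = store

    def read(self, session_id: str) -> bytes:
        doc = self.store.find(session_id)
        return doc["data"] if doc else b""  # unknown session -> empty payload

    def write(self, session_id: str, data: bytes) -> None:
        self.store.upsert(session_id, data, time.time())

    def destroy(self, session_id: str) -> None:
        self.store.delete(session_id)

    def gc(self, max_lifetime: float) -> int:
        # Remove sessions not written within the last `max_lifetime` seconds.
        return self.store.delete_older_than(time.time() - max_lifetime)


if __name__ == "__main__":
    store = SharedSessionStore()  # the single session database
    app1 = SessionHandler(store)  # handler on application server 1
    app2 = SessionHandler(store)  # handler on application server 2

    app1.write("abc123", b"cart=[knife-42]")
    # The customer's next request lands on server 2; the cart is still there.
    print(app2.read("abc123"))  # b'cart=[knife-42]'
```

Because the session lives in the shared store, it no longer matters which application server Varnish picks for a given request.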

Our New Setup

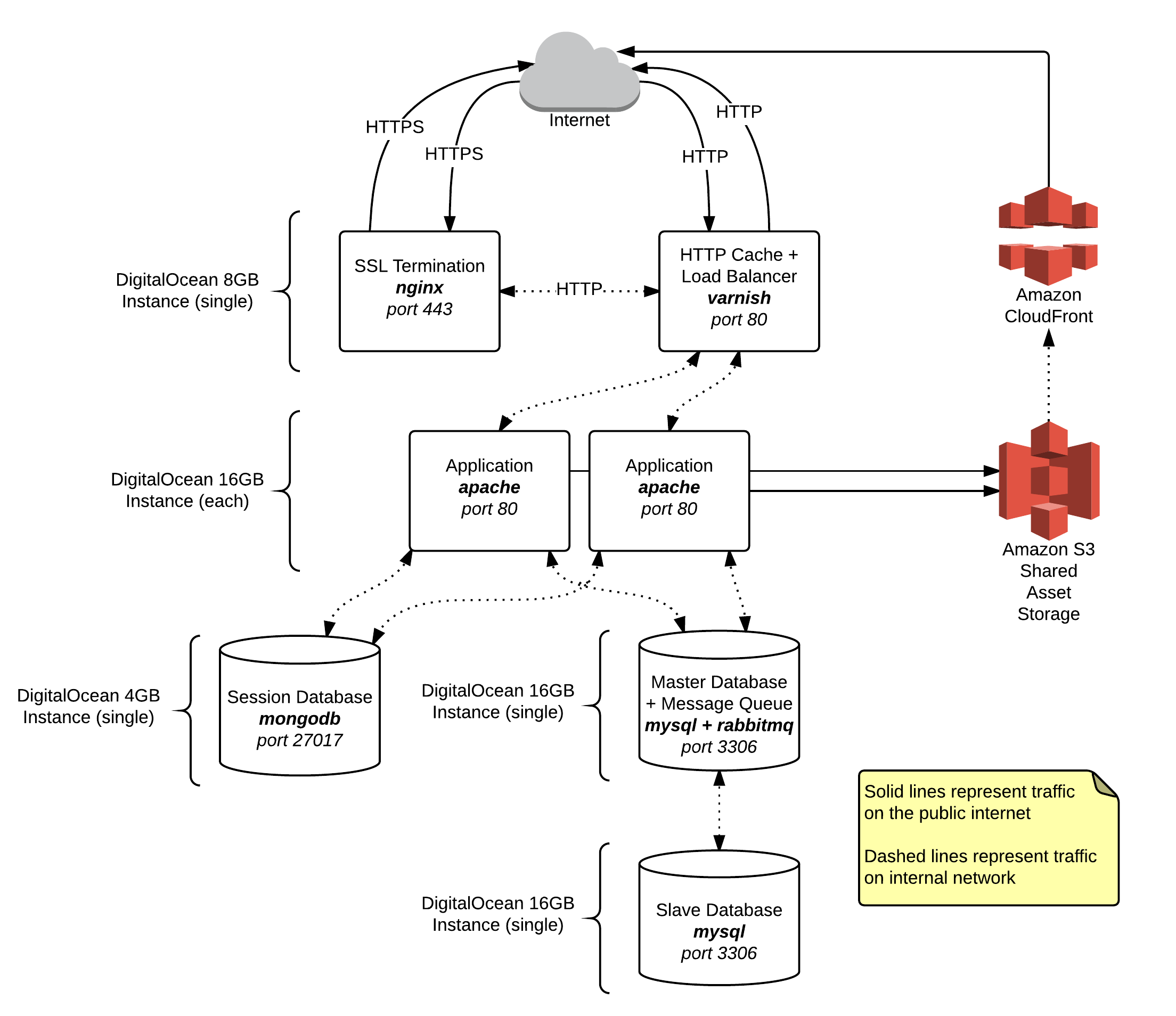

Here is a diagram of our new server farm infrastructure, which I’ll explain below. I’m not going to discuss the actual transition between our old and new setups, because that’s a whole different story. All in all, we had only one hour of downtime, in the middle of the night. I’m either lucky or I planned well.

Terminating Server (nginx + Varnish)

The only public-facing server is our terminating server, which runs on a DigitalOcean 8GB Droplet ($80/month). It uses Varnish for caching and load balancing, and nginx for SSL termination.

nginx

I wish I didn’t have to use nginx, but at the time of this writing, Varnish doesn’t “speak” SSL, so nginx is used solely for SSL termination. If you connect to one of our sites over HTTPS, nginx terminates that connection and forwards the request to Varnish as unencrypted internal traffic on port 80.

Varnish

Varnish has two purposes. First, it acts as a load balancer between our web servers, spreading traffic evenly among the active servers. Second, it is an HTTP cache, which dramatically decreases page load time for cached pages. It was VERY complicated to get this working with Symfony (I wanted long cache times and selective cache invalidation), but it works unbelievably well. I might write another post about how I got this working.
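The load-balancing half can be pictured with a minimal Python sketch, assuming a round-robin policy that skips servers marked unhealthy. Varnish itself does this in VCL (for example with a round-robin director and backend health probes); the class and backend names below are hypothetical, and this is not Varnish code.

```python
from typing import Dict, List


class RoundRobinDirector:
    """Toy round-robin load balancer over healthy backends (illustrative only)."""

    def __init__(self, backends: List[str]) -> None:
        self.backends = list(backends)
        self.healthy: Dict[str, bool] = {b: True for b in backends}
        self._next = 0  # index of the backend to try next

    def set_health(self, backend: str, ok: bool) -> None:
        self.healthy[backend] = ok

    def pick(self) -> str:
        # Walk at most one full lap from where we left off,
        # skipping backends that are marked unhealthy.
        for _ in range(len(self.backends)):
            backend = self.backends[self._next]
            self._next = (self._next + 1) % len(self.backends)
            if self.healthy[backend]:
                return backend
        raise RuntimeError("no healthy backends")


if __name__ == "__main__":
    director = RoundRobinDirector(["app1", "app2", "app3"])
    print([director.pick() for _ in range(3)])  # ['app1', 'app2', 'app3']
    director.set_health("app2", False)           # e.g. app2 pulled for maintenance
    print([director.pick() for _ in range(4)])  # ['app1', 'app3', 'app1', 'app3']
```

Skipping unhealthy servers is what lets one application server be taken down for maintenance without taking the sites down with it.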

Application/Web Servers (Apache)

These servers are the meat and potatoes of our company. They run the actual code that powers our sites and backend systems. As traffic increases or decreases, we can scale up or down by adding or removing these servers. We use DigitalOcean 16GB Droplets ($160/month), and the number that we use varies with the time of year.

Session Database (MongoDB)

As mentioned above, this server stores shared session data for use by the application servers. We use a single 4GB DigitalOcean Droplet ($40/month).

Master Database (MySQL) + Message Queue (RabbitMQ)

This is our main data storage server. We use a single DigitalOcean 16GB Droplet ($160/month).

MySQL

Our data is stored in MySQL, and all reads and writes go through this server. That is sufficient for our usage, but the data can be replicated to multiple slave servers if we need more read capacity.
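If read replicas were added, the application would need to decide where each query goes. Here is a minimal, hypothetical Python sketch of read/write splitting; it is not part of the setup described here. The host names are made up, and routing on the leading SQL keyword is a deliberate simplification.

```python
import random
from typing import List, Optional


class ConnectionRouter:
    """Hypothetical read/write splitter.

    Writes always go to the master. Plain SELECTs go to a randomly chosen
    replica when replicas exist; with none configured (today's setup),
    everything goes to the master.
    """

    def __init__(self, master: str, replicas: Optional[List[str]] = None,
                 rng: Optional[random.Random] = None) -> None:
        self.master = master
        self.replicas = list(replicas or [])
        self._rng = rng or random.Random()

    def route(self, sql: str) -> str:
        words = sql.split()
        is_read = bool(words) and words[0].upper() == "SELECT"
        # SELECT ... FOR UPDATE takes row locks, so it must run on the master.
        if is_read and "FOR UPDATE" in " ".join(words).upper():
            is_read = False
        if is_read and self.replicas:
            return self._rng.choice(self.replicas)
        return self.master


if __name__ == "__main__":
    today = ConnectionRouter("db-master")
    print(today.route("SELECT * FROM products"))  # db-master
    scaled = ConnectionRouter("db-master", ["db-replica-1", "db-replica-2"])
    print(scaled.route("UPDATE products SET stock = stock - 1 WHERE id = 42"))  # db-master
    print(scaled.route("SELECT * FROM products"))  # one of the replicas
```

One caveat with this approach: replication is asynchronous, so a read sent to a replica right after a write may not see that write yet.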

RabbitMQ

This is a recent addition to our infrastructure, and I love it. We primarily use it to store cache invalidation requests from our application servers, which are handled by consumers on a different server.
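The post doesn’t describe how invalidation requests are structured, so here is a minimal Python sketch of the general pattern under stated assumptions: the standard library’s `queue.Queue` and a worker thread stand in for RabbitMQ and the consumer server, and pages are purged by tag (e.g. a product ID), one common way to do selective invalidation. The class, function, and tag names are hypothetical.

```python
import queue
import threading
from collections import defaultdict
from typing import Dict, Optional, Set


class TaggedCache:
    """Toy page cache where each cached URL carries tags (e.g. a product ID),
    so a single product change can purge every page that displays it."""

    def __init__(self) -> None:
        self.pages: Dict[str, str] = {}
        self.urls_by_tag: Dict[str, Set[str]] = defaultdict(set)
        self._lock = threading.Lock()

    def store(self, url: str, body: str, tags: Set[str]) -> None:
        with self._lock:
            self.pages[url] = body
            for tag in tags:
                self.urls_by_tag[tag].add(url)

    def purge_tag(self, tag: str) -> int:
        """Drop every cached page tagged with `tag`; return how many URLs had it."""
        with self._lock:
            urls = self.urls_by_tag.pop(tag, set())
            for url in urls:
                # A purged URL may still be listed under other tags; popping
                # with a default keeps later purges of those tags harmless.
                self.pages.pop(url, None)
            return len(urls)


def consume(requests: "queue.Queue[Optional[str]]", cache: TaggedCache) -> None:
    """Worker loop standing in for a RabbitMQ consumer on another server."""
    while True:
        tag = requests.get()
        try:
            if tag is None:  # sentinel value: stop the worker
                return
            cache.purge_tag(tag)
        finally:
            requests.task_done()


if __name__ == "__main__":
    cache = TaggedCache()
    cache.store("/knives/chef-knife", "chef knife page", {"product:42"})
    cache.store("/category/kitchen", "kitchen category page", {"product:42", "product:7"})
    cache.store("/knives/paring-knife", "paring knife page", {"product:7"})

    invalidations = queue.Queue()
    worker = threading.Thread(target=consume, args=(invalidations, cache))
    worker.start()

    # An application server enqueues the request and returns immediately,
    # instead of purging the cache inline while the customer waits.
    invalidations.put("product:42")
    invalidations.put(None)
    worker.join()
    print(sorted(cache.pages))  # ['/knives/paring-knife']
```

The queue decouples the two sides: application servers only publish requests, and a separate consumer does the slower purging work at its own pace.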

Slave Database (MySQL)

This server is a replication slave from our master database. We use it to take hourly database backups without disrupting the production database. We also use it for miscellaneous background jobs, such as the cache invalidation jobs mentioned earlier. We use a single DigitalOcean 16GB Droplet ($160/month).

Monitoring (Scout)

Our new setup is a lot more complicated than our old setup, but it’s also a lot more robust and flexible. Believe it or not, the cost is almost the same. To keep tabs on everything, I use Scout to monitor the servers and send alerts when the inevitable problems occur.
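The Droplet prices listed above make the monthly server bill easy to estimate. Here is a small Python sketch that uses only those listed prices. Since the number of application servers varies through the year, it is a parameter. S3, CloudFront, and Scout charges are not included.

```python
# Monthly Droplet prices as listed in this post.
FIXED_SERVERS = {
    "terminating (nginx + Varnish), 8GB": 80,
    "session database (MongoDB), 4GB": 40,
    "master database (MySQL + RabbitMQ), 16GB": 160,
    "slave database (MySQL), 16GB": 160,
}
APP_SERVER_PRICE = 160  # one 16GB Droplet per application server


def monthly_cost(app_servers: int) -> int:
    """Total monthly Droplet cost in dollars for a given app-server count."""
    if app_servers < 1:
        raise ValueError("need at least one application server")
    return sum(FIXED_SERVERS.values()) + app_servers * APP_SERVER_PRICE


if __name__ == "__main__":
    for n in (2, 3, 4):
        print(n, "app servers:", monthly_cost(n))  # 760, 920, 1080
```

The fixed servers come to $440/month, and each additional application server adds $160/month.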